Switching and Bridging RouterOS v7 Book

Study material for the MTCSWE Certification Course updated to RouterOS v7

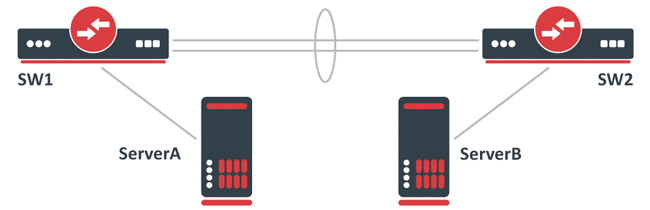

Scenario: A LAG (Link Aggregation Group) interface has been created to increase the total bandwidth between two network nodes, usually switches.

To verify that the LAG interface works correctly, two servers are connected and transfer data between each other, typically with the network performance measurement tool Iperf.

At the end of the article you will find a short test that will allow you to assess the knowledge acquired in this reading.

For example, a LAG interface may have been created from two Gigabit Ethernet ports, providing a virtual interface capable of balancing traffic across both interfaces and theoretically achieving 2Gbps throughput.

The servers, in this case, are connected using a 10Gbps interface, such as SFP+.

/interface bonding

add mode=802.3ad name=bond1 slaves=ether1,ether2

/interface bridge

add name=bridge1

/interface bridge port

add bridge=bridge1 interface=bond1

add bridge=bridge1 interface=sfp-sfpplus1

After initial tests, it is observed that network throughput never exceeds the 1Gbps limit, although the CPU load on the servers and network nodes (switches) is low. This is because a bond in LACP (802.3ad) mode uses a transmit hash policy to decide which LAG member carries each packet, and that decision determines whether traffic can be balanced over multiple members of the LAG.

In this case, a LAG interface does not create a 2Gbps interface, but rather an interface that can balance traffic over multiple slave interfaces when possible.

For each packet a transmission hash is generated, which determines which member of the LAG the packet will be sent through, thus preventing packets from getting out of order.

There is the option to select the transmission hash policy, which typically allows you to choose between Layer 2 (MAC), Layer 3 (IP), and Layer 4 (Port).

On RouterOS, this is selected with the transmit-hash-policy parameter. In this scenario the transmission hash never changes: all packets are sent to the same MAC address and the same IP address, and Iperf uses the same port. Every packet therefore produces the same transmission hash, which prevents load balancing between LAG members.
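As a minimal sketch, assuming the `bond1` interface from the configuration shown earlier, the policy could be changed so that IP addresses and ports are included in the hash. This only affects frames transmitted by this device; the peer switch applies its own policy to traffic in the opposite direction.

```
# Assumes the bond1 interface created earlier in this article.
/interface bonding
set bond1 transmit-hash-policy=layer-3-and-4
# Confirm the setting on the bonding interface.
print detail
```

A single stream will still use one LAG member, because its addresses and ports do not change. The policy only helps once several streams with different addresses or ports are present.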

It should be noted that packets will not always be balanced over LAG members even when the destination is different, since the standardized transmission hash policy may generate the same transmission hash for different destinations.
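Both effects can be illustrated with a worked example under a deliberately simplified assumption: the hash is the XOR of the last byte of the source and destination MAC addresses, and the member index is that hash modulo the number of LAG members (2 here). The MAC bytes are hypothetical, and the exact computation on a given device may differ.

$$
\begin{aligned}
\text{A} \to \text{B}: &\quad (\texttt{0x1A} \oplus \texttt{0x2B}) \bmod 2 = \texttt{0x31} \bmod 2 = 1 \\
\text{A} \to \text{C}: &\quad (\texttt{0x1A} \oplus \texttt{0x2C}) \bmod 2 = \texttt{0x36} \bmod 2 = 0 \\
\text{A} \to \text{D}: &\quad (\texttt{0x1A} \oplus \texttt{0x2D}) \bmod 2 = \texttt{0x37} \bmod 2 = 1
\end{aligned}
$$

Every packet from A to B lands on member 1, no matter how many are sent. Traffic to C uses member 0, while D, although it is a different destination, shares member 1 with B.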

Solution: select the appropriate transmission hash policy and test network performance properly.

The easiest way to test such configurations is to use multiple targets. For example, instead of sending data to a single server, data should be sent to multiple servers.

Packets sent to different servers can then produce different transmission hashes, which makes load balancing between LAG members possible.

In some cases, you might consider changing the bonding interface mode to increase overall performance.

For UDP traffic, balance-rr mode might be sufficient, but may cause problems for TCP traffic.

You can read more about selecting the right mode for your setup here.

The choice of bonding mode is crucial. While balance-rr (round-robin) may be effective for UDP traffic, it may not be ideal for TCP due to the possibility of packet reordering. Therefore, it is important to consider the type of traffic that will predominate in the network when choosing the bonding mode.
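For a lab comparison, the mode change could look like the sketch below, again assuming the `bond1` interface from the earlier configuration. The device at the other end of the link has to be reconfigured to match, since balance-rr does not negotiate through LACP.

```
# Assumes bond1 from the earlier configuration.
# Switches the bond from 802.3ad (LACP) to round-robin transmission.
/interface bonding
set bond1 mode=balance-rr
```

Because round-robin spreads the packets of a single flow across members, it is worth comparing TCP and UDP results before keeping this mode in production.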

Other aspects of the network configuration can also influence LAG performance. For example, switch configuration, hardware capabilities, and network policies can affect how traffic is handled across LAG interfaces.

It is essential to implement monitoring and diagnostic tools to better understand how traffic is behaving through the LAG. Tools like Wireshark or even diagnostic features built into switches can provide valuable information.
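On RouterOS itself, a quick check might look like the following sketch, which assumes the interface names used in the earlier configuration (`bond1`, `ether1`, `ether2`).

```
# Show the state of the bond, including which ports are active.
/interface bonding monitor bond1
# Watch the live rate on each LAG member while the test traffic is running.
/interface monitor-traffic ether1,ether2
```

If one member transmits close to 1Gbps while the other stays nearly idle, the transmission hash is mapping all test traffic to a single member.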

Although the LAG interface can theoretically achieve a bandwidth of 2Gbps in this scenario, it must be remembered that the actual performance can be affected by multiple factors, such as the quality of the cabling, the distance between the devices and the hardware configuration itself.

Testing with different configurations and traffic types can help identify the best configuration for a specific environment. This could include varying the destination IP address, port, or even the type of traffic (TCP vs UDP).

Ensuring that both switches and servers are running the latest, most stable version of their firmware and software can resolve previously unidentified issues and improve overall performance.

Load balancing on a LAG interface is a complex process that requires careful configuration and a detailed understanding of the network and its components.

Through proper transmission hash policy selection and extensive testing, network performance can be optimized and available resources can be used efficiently.

Additionally, staying abreast of updates and best practices in network configuration can significantly contribute to the effectiveness and stability of your network environment.


Av. Juan T. Marengo and J. Orrantia

Professional Center Building, Office 507

Guayaquil. Ecuador

Zip Code 090505


Copyright © 2024 abcxperts.com – All Rights Reserved
